- Business & Data Research

- Posts

- Social Media and Mental Health Awareness: Classification Use Case

Social Media and Mental Health Awareness: Classification Use Case

Social Media Dataset using Classification, KNN algorithm, Ensemble Learning

About the dataset :

This dataset was collected via a survey to investigate the relationship between social media usage habits and mental well-being. The study focuses on understanding how platform preference, daily usage time, and interaction patterns (such as checking notifications or engaging in arguments) correlate with user stress levels, sleep quality, and academic performance.

This data is particularly useful for exploring the psychological impact of digital habits, identifying "high-risk" user segments, and building predictive models for mental health trends related to technology.

Context:

The primary goal is to discover which social media habits are linked to high stress or low mood and to promote healthier online behavior

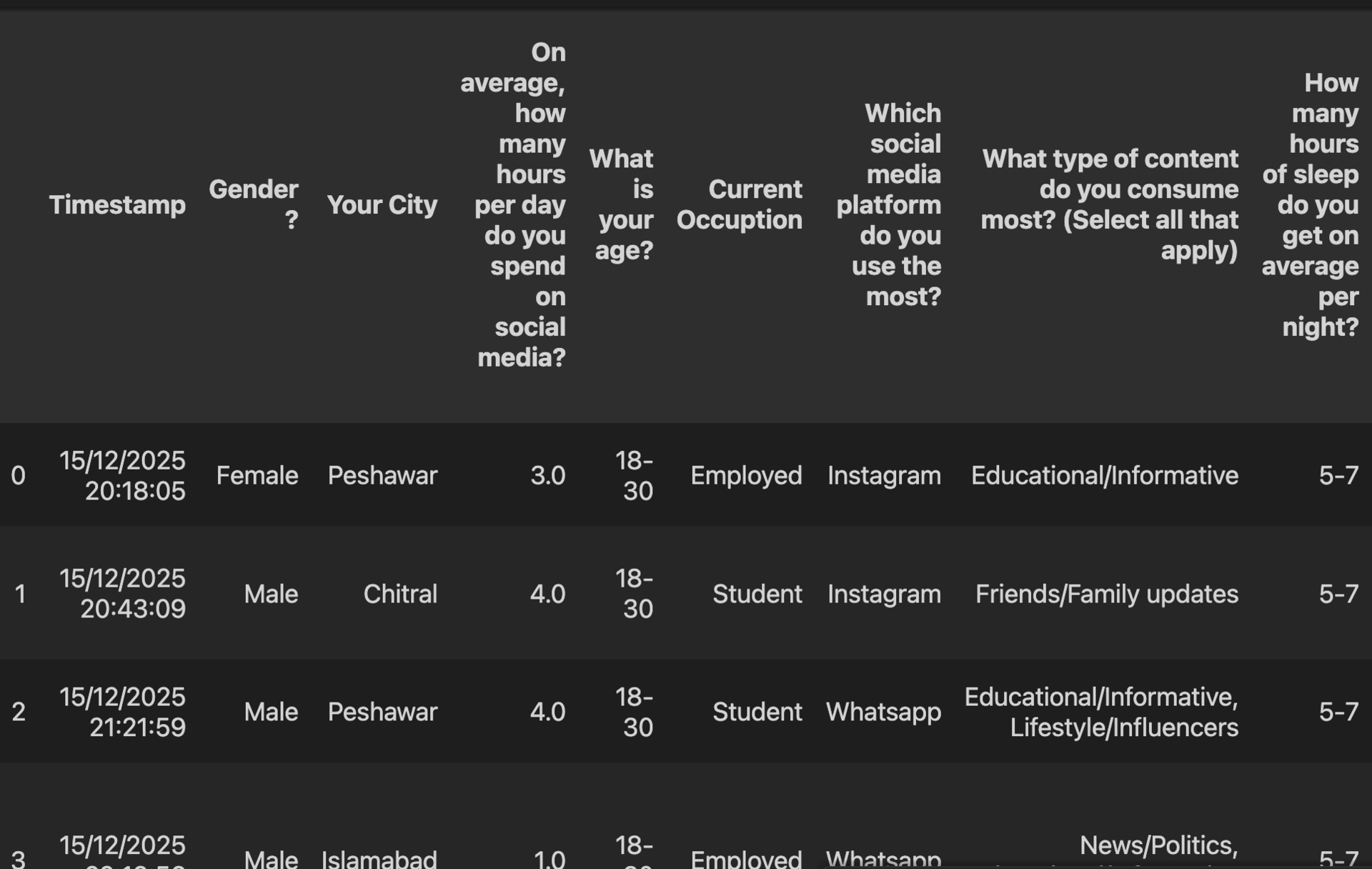

Step 2: Reading the dataset using Pandas:

social_media_df = pd.read_csv('/Users/maheshg/Dropbox/Sample Datasets Kaggle/Social Media and Mental Health.csv')

social_media_df.columns

Index(['Timestamp', 'Gender ?', 'Your City',

'On average, how many hours per day do you spend on social media?',

'What is your age?', 'Current Occuption',

'Which social media platform do you use the most?',

'What type of content do you consume most? (Select all that apply)',

'How many hours of sleep do you get on average per night?',

'Do you use social media right before sleeping? ',

'Do you check social media immediately after waking up? ',

'When you receive a notification while studying, what is your immediate reaction?',

' What is the specific thing about social media that causes you the most stress or anxiety? ',

'How often do you find yourself comparing your life to others?',

'Do you feel "FOMO" (Fear of Missing Out) when you are offline?',

'In a few words, describe how you feel when you see your friends having fun without you on social media.',

'With how many specific people do you interact (DM/Tag) on a daily basis?',

'How would you rate your current daily stress level?',

'What is your current CGPA range? ',

' Why do you usually open social media when you are supposed to be studying? ',

' Do you use social media to escape or forget about your real-life problems? ',

' How often do you get into arguments or heated discussions in comment sections? ',

' How does it affect your mood if a post you made gets fewer likes than you expected? ',

'Have you ever experienced any negative events on social media? (cyberbullying, harassment, hate comments, etc.) ',

'Do you have any medically approved mental disorder?'],

dtype='object')print(social_media_df_copy.columns.tolist())

['Timestamp', 'Gender ?', 'Your City', 'On average, how many hours per day do you spend on social media?', 'What is your age?', 'Current Occuption', 'Which social media platform do you use the most?', 'What type of content do you consume most? (Select all that apply)', 'How many hours of sleep do you get on average per night?', 'Do you use social media right before sleeping? ', 'Do you check social media immediately after waking up? ', 'When you receive a notification while studying, what is your immediate reaction?', ' What is the specific thing about social media that causes you the most stress or anxiety? ', 'How often do you find yourself comparing your life to others?', 'Do you feel "FOMO" (Fear of Missing Out) when you are offline?', 'In a few words, describe how you feel when you see your friends having fun without you on social media.', 'With how many specific people do you interact (DM/Tag) on a daily basis?', 'How would you rate your current daily stress level?', 'What is your current CGPA range? ', ' Why do you usually open social media when you are supposed to be studying? ', ' Do you use social media to escape or forget about your real-life problems? ', ' How often do you get into arguments or heated discussions in comment sections? ', ' How does it affect your mood if a post you made gets fewer likes than you expected? ', 'Have you ever experienced any negative events on social media? (cyberbullying, harassment, hate comments, etc.) ', 'Do you have any medically approved mental disorder?']

# Encode categorical columns

label_encoder = {}

### Scale the data :

scaler = StandardScaler()

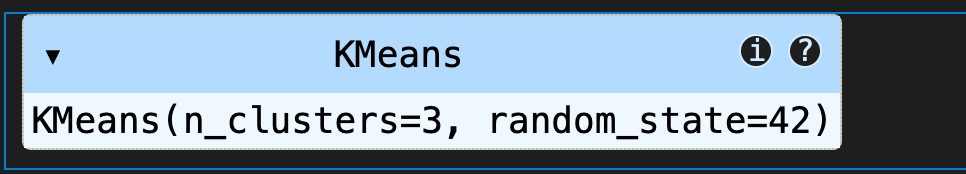

scaled_data = scaler.fit_transform(social_media_cluster)### Run K means clustering :

kmeans = KMeans(n_clusters=3, random_state=42)

kmeans.fit(scaled_data)

social_media_cluster['cluster'] = kmeans.fit_predict(scaled_data)cluster_data = social_media_df_copy[cluster_cols]

cluster_data['cluster'] = social_media_cluster['cluster']# Elbow (k-bend) method, automatic elbow detection

Conclusion:

This suggests using 3 clusters (k = {0}) as increasing k beyond this point yields diminishing reductions in inertia, so additional clusters likely add little explanatory value. Proceed with k = {0} and validate clusters by examining cluster sizes, decoded centers, and domain relevance.